How Volatility Regime Filtration Protects Trading Infrastructure

Markets do not warn you before conditions change. Volatility regimes shift without fanfare, liquidity becomes selective, and execution costs rise faster than signal confidence can adjust. The difference between resilient infrastructure and fragile deployment often comes down to one architectural decision: volatility regime filtration in systematic trading. This discipline determines whether the system can deny itself permission to trade when the environment no longer supports stable execution.

Most systems treat volatility as a parameter to estimate. Institutional infrastructure treats volatility as permission logic. When realized volatility exceeds structural tolerance, or when liquidity depth deteriorates past filtration thresholds, the correct response is not “adjust the model” but “refuse the trade.” That distinction separates behaviour-first engineering from prediction-dependent activity.

Dovest approaches design through structure before prediction, integrity before excitement, and risk before returns. In this article, we examine how volatility regime filtration in systematic trading protects infrastructure by encoding environment permission as a required input rather than an operator judgment call.

Understanding structural protection through regime filtration

Volatility is not a forecast target. It describes how much price can move in compressed time, how quickly gaps appear, and how unreliable recent fills become as predictors of next fills. These properties determine whether execution can occur without structural damage.

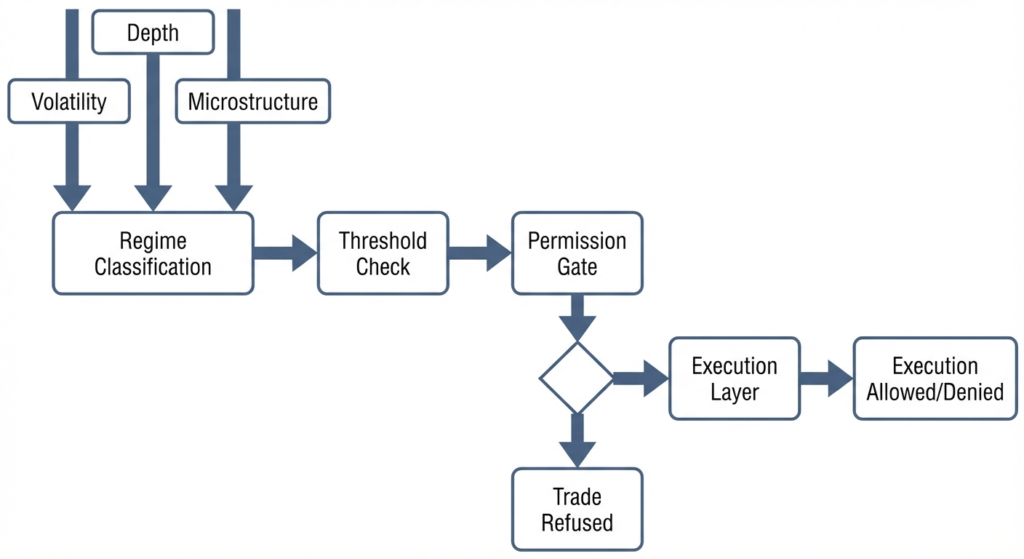

A clean architecture treats regime classification not as a market view but as an environmental input that governs what the system is allowed to attempt. Environmental inputs change the question from “what do we think will happen?” to “what does the environment currently permit?”

Volatility regime filtration begins with observable thresholds

Volatility regime filtration in systematic trading requires measurable boundaries. These boundaries are not “best guesses” about future volatility but observations about current behaviour compared to stability requirements.

Common structural inputs include realized volatility over recent periods, intraday range relative to typical liquidity depth, gap frequency, and auction participation rates. Each metric describes a dimension of execution feasibility rather than directional opportunity.

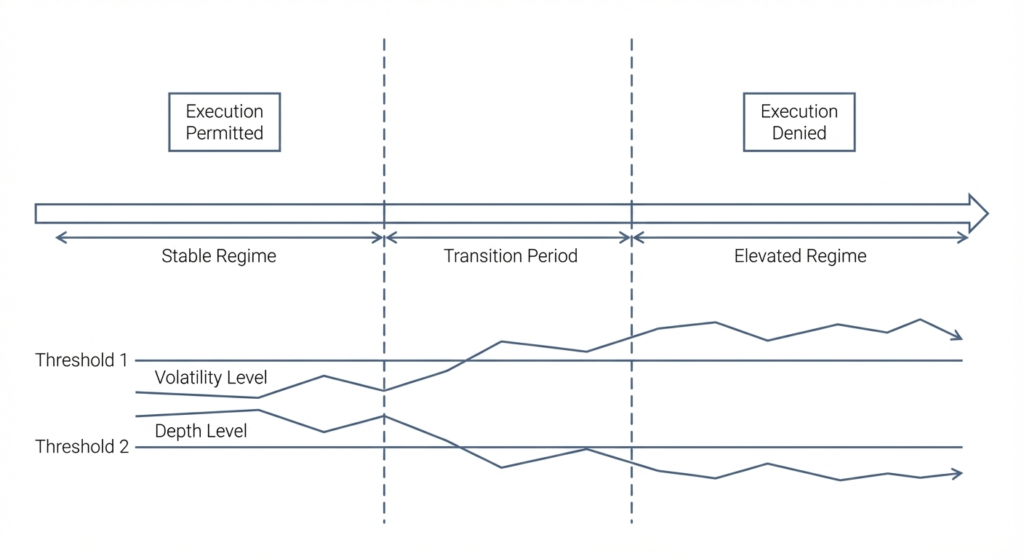

When any threshold is breached, regime filtration denies permission. The signal layer can still observe. The risk layer can still calculate exposure. Execution simply refuses until conditions stabilize. This is not caution; it is constraint enforcement.
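A minimal sketch of the any-breach rule, in Python. The metric names, their limits, and the choice to treat a missing metric as a breach (failing closed) are assumptions for illustration, not a prescribed configuration. All metrics are assumed to be oriented so that a higher value means worse execution conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PermissionDecision:
    permitted: bool
    breached: tuple[str, ...]  # names of the limits that were exceeded or missing


def check_permission(metrics: dict[str, float], limits: dict[str, float]) -> PermissionDecision:
    """Grant execution permission only if every observed metric is within its limit.

    Assumes every metric is oriented so that a higher value means worse execution
    conditions. A metric absent from the snapshot is treated as a breach, so the
    gate fails closed rather than open.
    """
    breached = tuple(
        name
        for name, limit in limits.items()
        if name not in metrics or metrics[name] > limit
    )
    return PermissionDecision(permitted=not breached, breached=breached)


# Hypothetical limits; names and values are placeholders, not calibrated thresholds.
LIMITS = {
    "realized_vol": 0.30,    # annualized realized volatility
    "range_to_depth": 2.0,   # intraday range relative to typical liquidity depth
    "gap_rate": 0.05,        # fraction of recent bars that opened with a gap
    "auction_share": 0.40,   # fraction of volume printed in auctions
}

snapshot = {"realized_vol": 0.22, "range_to_depth": 2.6, "gap_rate": 0.01, "auction_share": 0.10}
decision = check_permission(snapshot, LIMITS)
print(decision)  # PermissionDecision(permitted=False, breached=('range_to_depth',))
```

The decision carries the names of the breached limits, so the execution layer can refuse and record why in a single step.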

Volatility regime filtration prevents execution in deteriorating conditions

Deterioration is gradual until it is not. Spreads widen slightly, then significantly. Depth replenishes more slowly, then sporadically. Queue dynamics shift from predictable to auction-dominated, and these changes often precede larger dislocations.

A system without volatility regime filtration in systematic trading will continue operating through early deterioration because recent fills still “worked.” By the time fills visibly start failing, the environment may already have been denying stable execution for minutes or hours. In effect, the system has been trading without permission.

Filtration makes permission explicit. Regime boundaries are encoded as gates that execution must pass before attempting fills. When gates close, the system stops proposing orders regardless of signal strength or risk appetite.

Infrastructure design patterns for regime filtration

Institutional systems must behave consistently in environments that do not cooperate. That consistency is impossible if the system requires human judgment to decide “is this too volatile to trade?”

Implementing volatility regime filtration in systematic trading creates a design pattern: environmental conditions are checked before execution is permitted, not after fills degrade. This approach shifts discretion from operators to architecture.

Volatility regime filtration defines permission as a system input

Permission is frequently treated as implicit. If the system is running, trades are allowed. That assumption creates hidden dependencies on calm markets.

In a filtration-based design, permission is an explicit input derived from regime classification. The system calculates whether current volatility, liquidity depth, and microstructure stability fall within acceptable bounds. If they do not, execution is denied.

This design prevents the common failure mode where systems “try harder” under stress. Increased retries, wider tolerances, and more aggressive order types all represent discretionary escalation. Filtration blocks that path by making refusal the default when conditions deteriorate.

Volatility regime filtration enforces phase-dependent exposure limits

Volatility regimes do not just affect execution. They also determine what exposure is structurally safe. A position that is manageable in low-volatility conditions may become unmanageable when intraday swings exceed liquidity depth.

Phase-dependent limits tie exposure to regime state. When the system detects a regime transition from stable to elevated volatility, exposure limits tighten automatically. The risk layer enforces reduced concentration, shorter holding periods, or lower leverage depending on predefined policy.

Those who want broader context on how environment-based constraints shape system behaviour may find this article on environment-based trading principles useful for understanding how permission becomes a first-class design element.

This is not dynamic allocation based on opportunity. It is structural constraint based on feasibility. The system does not “bet less” because it is uncertain; it limits exposure because the environment can no longer support clean exits.
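One way to encode phase-dependent limits is a lookup table fixed before deployment. The sketch below assumes three hypothetical regime labels and placeholder limit values; only the structure, regime state in and limits out, is the point.

```python
from dataclasses import dataclass
from enum import Enum


class Regime(Enum):
    STABLE = "stable"
    ELEVATED = "elevated"
    STRESSED = "stressed"


@dataclass(frozen=True)
class ExposureLimits:
    max_gross_leverage: float
    max_holding_minutes: int   # enforced by a separate exit scheduler, not shown here
    allow_new_positions: bool


# Hypothetical pre-committed policy; the numbers are placeholders for illustration.
EXPOSURE_POLICY = {
    Regime.STABLE: ExposureLimits(max_gross_leverage=2.0, max_holding_minutes=24 * 60, allow_new_positions=True),
    Regime.ELEVATED: ExposureLimits(max_gross_leverage=1.0, max_holding_minutes=4 * 60, allow_new_positions=True),
    Regime.STRESSED: ExposureLimits(max_gross_leverage=0.5, max_holding_minutes=60, allow_new_positions=False),
}


def exposure_allowed(regime: Regime, proposed_gross_leverage: float, opens_new_position: bool) -> bool:
    """Check a proposed exposure change against the limits for the current regime."""
    limits = EXPOSURE_POLICY[regime]
    if opens_new_position and not limits.allow_new_positions:
        return False
    return proposed_gross_leverage <= limits.max_gross_leverage


print(exposure_allowed(Regime.STABLE, 1.5, opens_new_position=True))    # True
print(exposure_allowed(Regime.ELEVATED, 1.5, opens_new_position=True))  # False
```

Because the table is data rather than logic, a regime transition tightens every limit at once without anyone deciding to do so.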

Volatility regime filtration across liquidity depth and microstructure

Volatility and liquidity are not independent. Rising volatility often coincides with reduced depth, wider spreads, and less predictable queue dynamics. A robust filtration layer monitors both dimensions simultaneously.

Research on market microstructure from institutions like the Bank for International Settlements highlights that liquidity provision deteriorates non-linearly during stress, creating execution risk that compounds with volatility. That relationship makes regime classification a multivariate problem, not a single volatility estimate.

Volatility regime filtration monitors depth alongside realized volatility

Realized volatility describes how much price moved. Depth describes how much liquidity exists to absorb orders without moving price further. Both matter for execution feasibility.

A system might observe moderate realized volatility but severely degraded depth. That combination suggests the market is fragile even if recent moves appear contained. Volatility regime filtration in systematic trading should therefore be able to deny execution on a depth breach alone, regardless of volatility readings.

Conversely, elevated volatility with stable depth may indicate a trending environment where execution remains feasible. Volatility regime filtration in systematic trading must account for that distinction. The goal is not to avoid volatility but to avoid trading when the environment cannot support stable fills.
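The asymmetry described above can be written as a small rule. This sketch assumes both inputs are percentile ranks against the instrument's own history and uses made-up floor and ceiling values; a production filter would likely use more inputs.

```python
def execution_feasible(vol_rank: float, depth_rank: float,
                       depth_floor: float = 0.10, vol_ceiling: float = 0.95) -> bool:
    """Joint volatility/depth check.

    Both inputs are hypothetical percentile ranks in [0, 1] of the current reading
    against the instrument's own history (for depth, 0.0 is the thinnest book seen).
    """
    if depth_rank < depth_floor:
        # Severely degraded depth denies execution on its own,
        # however contained recent price moves look.
        return False
    # With depth intact, elevated volatility is tolerated; only an extreme reading denies.
    return vol_rank <= vol_ceiling


print(execution_feasible(vol_rank=0.50, depth_rank=0.05))  # False: fragile despite moderate vol
print(execution_feasible(vol_rank=0.85, depth_rank=0.60))  # True: volatile but well supplied
```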

Volatility regime filtration tracks microstructure stability

Microstructure stability refers to how predictably the market processes orders. Stable microstructure means spreads are consistent, depth replenishes at known rates, and fills occur near expected levels. Unstable microstructure means auction mechanisms dominate, queue positions become unreliable, and slippage becomes path-dependent.

A filtration layer can monitor microstructure through observable proxies: spread variance, time-to-fill distributions, and cancellation rates. When those metrics exceed thresholds, volatility regime filtration in systematic trading revokes execution permission even if volatility appears moderate.

This prevents the system from “learning” execution patterns that only work in cooperative environments. It also reduces the temptation to optimize around implementation artefacts rather than structural feasibility.
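The three proxies can be computed from a recent window of market data. This is a sketch under assumptions: the window contents, the 90th-percentile choice for time-to-fill, and the fail-closed default when no orders were observed are illustrative, and the function names are hypothetical.

```python
import statistics


def microstructure_proxies(spreads: list[float], fill_times_ms: list[float],
                           orders_placed: int, orders_cancelled: int) -> dict[str, float]:
    """Compute spread variance, tail time-to-fill, and cancellation rate for one window.

    `orders_placed` and `orders_cancelled` are assumed to be counts observed in the
    order book feed over the same window.
    """
    # statistics.quantiles with n=10 returns nine cut points; the last is the 90th percentile.
    ttf_p90 = statistics.quantiles(fill_times_ms, n=10)[-1]
    return {
        "spread_variance": statistics.pvariance(spreads),
        "ttf_p90_ms": ttf_p90,
        # With no orders observed there is no evidence of stability, so report the worst case.
        "cancel_rate": orders_cancelled / orders_placed if orders_placed else 1.0,
    }


def microstructure_stable(proxies: dict[str, float], limits: dict[str, float]) -> bool:
    # Every proxy must stay at or below its (hypothetical) limit.
    return all(proxies[name] <= limit for name, limit in limits.items())


proxies = microstructure_proxies(
    spreads=[0.01, 0.01, 0.02, 0.01],
    fill_times_ms=[10, 20, 30, 40, 50, 60, 70, 80, 90, 100],
    orders_placed=500,
    orders_cancelled=200,
)
print(microstructure_stable(proxies, {"spread_variance": 1e-4, "ttf_p90_ms": 150, "cancel_rate": 0.5}))
```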

Volatility regime filtration through threshold design

Threshold design is where filtration becomes enforceable. Thresholds that are too tight will deny most trades. Thresholds that are too loose provide no protection. The challenge is calibrating boundaries that reflect structural constraints rather than risk appetite.

Volatility regime filtration uses historical context, not predictions

Effective thresholds are derived from historical stability boundaries, not from forecasts about future volatility. Volatility regime filtration in systematic trading asks: “Under what conditions has execution historically remained stable?” not “What volatility do we expect tomorrow?”

A common approach is to define regimes based on percentile rankings of current realized volatility, depth, and spread behaviour against their own recent history, with each metric oriented so that a higher rank means worse conditions (for depth, that means ranking thinness rather than size). For example, volatility regime filtration in systematic trading might trigger elevated regime classification when conditions exceed the 75th percentile, while stressed classification occurs above the 90th percentile.

Each classification maps to execution constraints: tighter feasibility checks, lower maximum order sizes, or complete denial of new positions. Volatility regime filtration in systematic trading defines those mappings before deployment, not during stress.
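A percentile-based classifier along these lines might look like the following. The 75th/90th cut-offs come from the example above; the worst-of-all-metrics aggregation and the higher-is-worse orientation are assumptions of this sketch.

```python
from bisect import bisect_left

ORDER = ("stable", "elevated", "stressed")


def percentile_rank(history: list[float], value: float) -> float:
    """Fraction of historical observations strictly below `value`."""
    ordered = sorted(history)
    return bisect_left(ordered, value) / len(ordered)


def classify(rank: float, elevated_above: float = 0.75, stressed_above: float = 0.90) -> str:
    if rank > stressed_above:
        return "stressed"
    if rank > elevated_above:
        return "elevated"
    return "stable"


def classify_regime(history: dict[str, list[float]], current: dict[str, float]) -> str:
    """Overall regime is the worst per-metric classification.

    Assumes each metric is oriented so higher means worse (e.g. depth entered as a
    shortfall or as 1/depth), so one percentile scale fits all of them.
    """
    labels = [classify(percentile_rank(history[m], current[m])) for m in current]
    return max(labels, key=ORDER.index)


hist = {"vol": list(range(100)), "spread": list(range(100))}
print(classify_regime(hist, {"vol": 50, "spread": 80}))  # elevated
```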

Volatility regime filtration adjusts lookback periods by market phase

Lookback periods determine how much history the system uses to classify current conditions. Shorter lookbacks detect transitions faster but are more sensitive to noise. Longer lookbacks provide stability but lag regime shifts.

A robust design uses adaptive lookbacks. During stable regimes, the system can afford longer lookbacks to filter noise. During transitions, shorter lookbacks allow faster detection of deteriorating conditions and prevent the system from operating on stale regime classifications when the environment changes quickly.

The key is that adaptation is rule-based, not discretionary. Volatility regime filtration in systematic trading does not “decide” to shorten lookbacks because it feels uncertain; it shortens them only when predefined volatility acceleration metrics exceed thresholds.
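A rule-based lookback switch can be sketched as follows. Measuring acceleration as the ratio of short-window to long-window realized volatility, and the specific window lengths and 1.5 threshold, are assumptions chosen for illustration.

```python
import statistics


def choose_lookback(returns: list[float], long_lb: int = 60, short_lb: int = 15,
                    accel_threshold: float = 1.5) -> int:
    """Pick the classification lookback (in bars) by a fixed rule, not by judgment.

    Volatility acceleration is measured as the ratio of short-window to long-window
    realized volatility (sample standard deviation of returns). If it exceeds
    `accel_threshold`, the short lookback is used so classification tracks the change.
    """
    short_vol = statistics.stdev(returns[-short_lb:])
    long_vol = statistics.stdev(returns[-long_lb:])
    if long_vol == 0:
        # Flat long window: any short-window movement counts as acceleration.
        return short_lb if short_vol > 0 else long_lb
    return short_lb if short_vol / long_vol > accel_threshold else long_lb


calm = [0.001, -0.001] * 30
shocked = calm[:45] + [0.02, -0.02] * 7 + [0.02]
print(choose_lookback(calm), choose_lookback(shocked))  # 60 15
```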

Volatility regime filtration for execution refusal logic

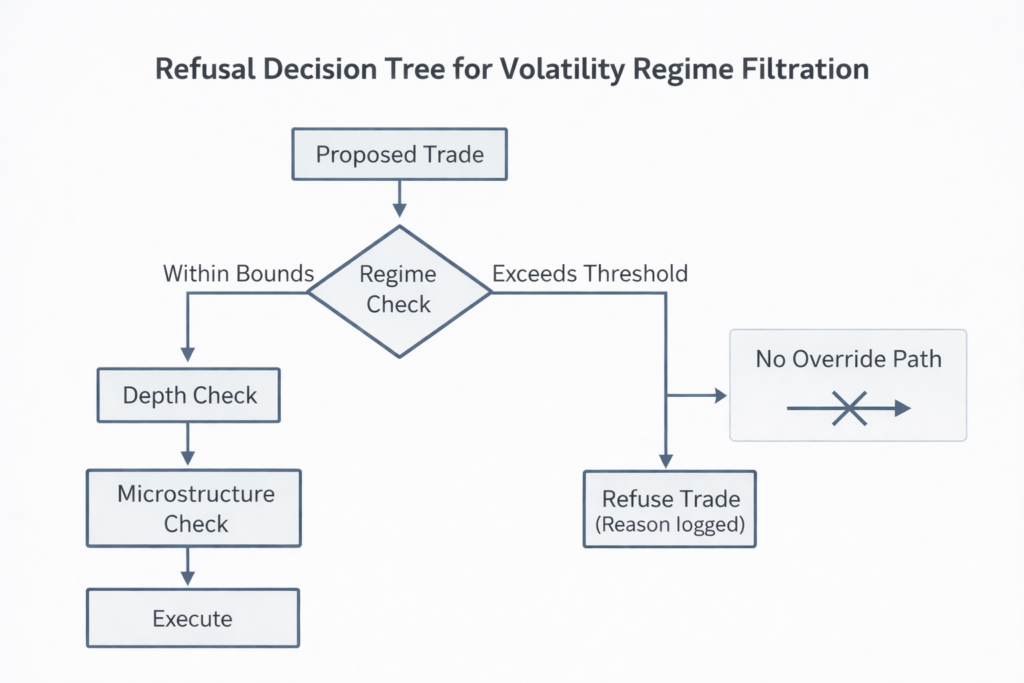

Refusal logic is the practical expression of filtration. It is the code path that says “no” when the environment denies permission. Without robust refusal logic, filtration degrades into warnings that operators can override.

Volatility regime filtration makes refusal a first-class output

Most systems treat refusal as failure. Orders that do not execute are logged as errors or edge cases, and this framing encourages “fixing” refusals through retries, wider tolerances, or manual intervention.

In a filtration-based design, refusal is expected output. Volatility regime filtration in systematic trading is designed to refuse frequently when conditions deteriorate. Refusal is logged with full context: which threshold was breached, what regime classification triggered the denial, and what metrics exceeded bounds.

That context matters for governance. It allows post-analysis to distinguish between structural refusals (environment denied permission) and implementation failures (execution logic malfunctioned). Those are different problems requiring different responses.

Volatility regime filtration prevents discretionary overrides

Discretion is useful for design iteration but destructive during live operation. When operators can override regime filtration “just this once,” the system stops being systematic.

A clean architecture removes override capability from execution pathways. If the regime classification says “deny,” execution denies. There is no escalation ladder or “supervisor approval” mechanism. The system either has permission or it does not.

This may seem rigid, but in practice it prevents the most common form of discipline erosion: treating every refusal as a problem to solve rather than a constraint to respect. Those interested in how pre-commitment rules eliminate real-time discretion may find value in this discussion of pre-commitment rules in trading decisions.

Managing regime transitions

Regime transitions are when filtration matters most. Transitions rarely announce themselves clearly. Instead, friction accumulates: volatility rises gradually, then spikes. Depth thins slowly, then collapses. Auctions become more influential, and gaps become more frequent.

A system without regime filtration will continue operating through early transition signals because recent behaviour still “worked.” By the time the regime shift becomes obvious, the system has already executed trades in an environment that was denying permission for minutes or hours.

Volatility regime filtration detects transitions early

Early detection requires sensitive metrics. A robust approach monitors rate-of-change in volatility, not just absolute levels. A sudden acceleration in realized volatility may indicate a regime shift even if absolute volatility remains below historical highs.

Similarly, depth deterioration rates matter more than absolute depth levels. A market with declining depth is signaling reduced participation, and that signal is actionable before depth falls below minimum thresholds.

Volatility regime filtration in systematic trading uses those signals to tighten constraints preemptively. Execution feasibility checks become stricter. Order size limits decrease. Queue risk tolerances narrow. These changes occur automatically based on rule-based detection, not on operator concern.
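Rate-of-change monitoring can be as simple as comparing the latest reading with one a fixed number of observations earlier. The window and thresholds here are hypothetical; note that both flags fire on relative change alone, whatever the absolute level of volatility or depth.

```python
def rate_of_change(series: list[float], window: int) -> float:
    """Relative change from `window` observations ago to the latest one.

    Assumes a strictly positive series (volatility and depth readings).
    """
    past, latest = series[-window - 1], series[-1]
    return (latest - past) / past


def early_transition_flags(vol: list[float], depth: list[float], window: int = 5,
                           max_vol_rise: float = 0.5, max_depth_drop: float = 0.3) -> dict[str, bool]:
    # Hypothetical thresholds: a 50% rise in volatility or a 30% loss of depth
    # over `window` observations flags a possible transition.
    return {
        "vol_accelerating": rate_of_change(vol, window) > max_vol_rise,
        "depth_draining": rate_of_change(depth, window) < -max_depth_drop,
    }


flags = early_transition_flags(
    vol=[0.10, 0.10, 0.11, 0.12, 0.14, 0.16],
    depth=[1000, 980, 950, 900, 820, 650],
)
print(flags)  # {'vol_accelerating': True, 'depth_draining': True}
```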

Volatility regime filtration enforces cooldown periods after transitions

Even after volatility stabilizes, the environment may not immediately return to normal participation patterns. Depth can remain thin, spreads can stay wide, and microstructure can remain fragile for hours after a spike.

A filtration-based design enforces cooldown periods before restoring normal execution permissions. Regime filtration requires sustained stability—defined by predefined metrics remaining within bounds for a minimum duration—before resuming standard operations.

Research on market volatility and financial stability from the International Monetary Fund demonstrates that market stress often persists beyond the initial shock, with liquidity conditions remaining impaired long after volatility appears to normalize. This finding supports the engineering rationale for cooldown requirements.

Cooldowns are not conservative guessing but structural acknowledgment that regime transitions have momentum. The system waits for confirmation that permission has been restored, not just that the spike has ended.
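A cooldown can be implemented as a small state machine that restores permission only after an unbroken run of in-bounds observations. The 30-minute requirement and the class name are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CooldownGate:
    """Withhold normal permission until metrics have stayed in bounds for `min_stable_s`."""
    min_stable_s: float
    stable_since: Optional[float] = None

    def update(self, now_s: float, within_bounds: bool) -> bool:
        """Feed one observation; return True only once the stability window is complete."""
        if not within_bounds:
            # Any breach restarts the clock.
            self.stable_since = None
            return False
        if self.stable_since is None:
            self.stable_since = now_s
        return now_s - self.stable_since >= self.min_stable_s


gate = CooldownGate(min_stable_s=30 * 60)  # hypothetical 30-minute requirement
for t, ok in [(0, False), (600, True), (1200, False), (1500, True), (3300, True)]:
    print(t, gate.update(t, ok))
```

Only the final observation returns True: the breach at t=1200 restarts the clock, so the full window is measured from t=1500.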

Observability and diagnostics

Observability is critical when refusals occur. Without clear logs, operators cannot distinguish between appropriate filtration and implementation bugs. A well-designed filtration layer produces diagnostic output that explains every denial.

Volatility regime filtration logs classification state continuously

Classification state should be logged whether or not trades are proposed. Volatility regime filtration in systematic trading creates a timeline of regime changes independent of trading activity. That timeline is essential for post-analysis.

When a refusal occurs, logs should capture: current regime classification, which thresholds were exceeded, input metrics at time of refusal, lookback period used for classification, and time since last regime transition. This context allows reviewers to validate that filtration behaved as designed.
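Recording refusals as structured, expected events makes the fields listed above queryable. This sketch uses Python's standard `json` and `dataclasses` modules; the record layout and field names are assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RefusalRecord:
    regime: str                      # classification at the time of refusal
    breached: tuple[str, ...]        # which thresholds were exceeded
    metrics: dict[str, float]        # input metrics at the time of refusal
    lookback: int                    # lookback (in bars) used for classification
    seconds_since_transition: float  # time since the last regime change
    timestamp: float                 # epoch seconds


def format_refusal(record: RefusalRecord) -> str:
    """Render a refusal as one JSON line: an expected event, not an error."""
    return json.dumps({"event": "refusal", **asdict(record)}, sort_keys=True)


line = format_refusal(RefusalRecord(
    regime="stressed",
    breached=("depth_rank",),
    metrics={"depth_rank": 0.04, "vol_rank": 0.62},
    lookback=15,
    seconds_since_transition=240.0,
    timestamp=1_700_000_000.0,
))
print(line)
```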

Volatility regime filtration supports replay and testing

Regime filtration is only trustworthy if it can be replayed under historical conditions. The system must therefore store enough state to reconstruct every classification decision from archived market data.

This capability supports testing new threshold calibrations before deployment. Volatility regime filtration in systematic trading can replay weeks of historical data, apply proposed threshold changes, and evaluate how many refusals would have occurred. That analysis informs threshold tuning without requiring live experimentation.
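Replay-based calibration can start as simply as re-running a candidate set of limits over archived metric snapshots and counting refusals per metric. The snapshot format, metric names, and values below are hypothetical.

```python
def replay(snapshots: list[dict[str, float]], limits: dict[str, float]) -> tuple[int, dict[str, int]]:
    """Count how many archived snapshots a set of limits would have refused, and why."""
    refused = 0
    by_metric = {name: 0 for name in limits}
    for snap in snapshots:
        breached = [name for name, limit in limits.items() if snap[name] > limit]
        if breached:
            refused += 1
            for name in breached:
                by_metric[name] += 1
    return refused, by_metric


archive = [  # hypothetical archived metric snapshots
    {"vol": 0.12, "spread_bps": 1.0},
    {"vol": 0.31, "spread_bps": 1.2},
    {"vol": 0.33, "spread_bps": 3.5},
    {"vol": 0.18, "spread_bps": 2.4},
]
current = {"vol": 0.30, "spread_bps": 2.0}
proposed = {"vol": 0.35, "spread_bps": 2.0}
print(replay(archive, current))   # (3, {'vol': 2, 'spread_bps': 2})
print(replay(archive, proposed))  # (2, {'vol': 0, 'spread_bps': 2})
```

Comparing the two outputs shows which metric drives the change in refusal count before any threshold reaches production.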

Replay also supports governance. When stakeholders question why certain periods saw high refusal rates, replay provides definitive answers based on recorded regime states and threshold breaches.

Handling correlation breakdown events

Correlation breakdown events occur when relationships between instruments or markets that appeared stable suddenly become unreliable. These events often coincide with volatility spikes but can occur independently. Volatility regime filtration in systematic trading must account for both.

Monitoring correlation stability

Correlation stability is a signal about market functioning. When correlations break down, it often indicates that normal market mechanisms are impaired. Liquidity providers may withdraw, execution costs may rise unpredictably, and risk models may become unreliable.

A filtration layer can monitor correlation metrics as inputs to regime classification. When correlation variance exceeds thresholds, volatility regime filtration in systematic trading can elevate regime classification even if realized volatility remains moderate. This prevents trading through correlation breakdowns that appear calm on volatility metrics alone.

Treating breakdown as elevated regime

Correlation breakdown should trigger the same constraints as elevated volatility: tighter execution feasibility checks, reduced order sizes, and potentially complete denial of new positions. Volatility regime filtration in systematic trading does not attempt to “trade through” breakdown events by adjusting models in real time.

This design acknowledges that correlation breakdown is fundamentally a signal about market quality, not about opportunity. When market quality deteriorates, the appropriate response is constraint tightening, not position adjustment.

Integration with risk architecture

Risk architecture must respect regime classifications. A system where risk limits remain static across regimes is exposed to the same failure mode as one without filtration: assuming stable execution conditions regardless of environment state.

Concentration limits informed by regime state

Concentration limits define maximum exposure to any single instrument, sector, or strategy. Those limits should tighten when regime filtration detects elevated or stressed conditions.

A position that represents acceptable concentration during stable regimes may become excessive when volatility rises and liquidity thins. Volatility regime filtration in systematic trading provides the regime state that the risk layer uses to scale concentration limits dynamically.

This is not discretionary risk adjustment but pre-committed policy: “When regime state equals X, concentration limits equal Y.” The rules are defined before stress, not negotiated during stress.
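The pre-committed rule quoted above maps directly onto a lookup table. The caps below are hypothetical values, and regimes are plain strings to keep the sketch short.

```python
# Hypothetical pre-committed caps: maximum absolute portfolio weight per instrument.
CONCENTRATION_CAP = {"stable": 0.20, "elevated": 0.10, "stressed": 0.05}


def concentration_breaches(weights: dict[str, float], regime: str) -> list[str]:
    """Return instruments whose absolute weight exceeds the cap for the current regime."""
    cap = CONCENTRATION_CAP[regime]
    return sorted(name for name, w in weights.items() if abs(w) > cap)


book = {"A": 0.15, "B": -0.08, "C": 0.04}
for regime in CONCENTRATION_CAP:
    print(regime, concentration_breaches(book, regime))
# stable []
# elevated ['A']
# stressed ['A', 'B']
```

The same book passes in a stable regime and breaches in a stressed one without any position changing, which is exactly the behaviour the policy is meant to enforce.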

Position reduction protocols

In extreme cases, volatility regime filtration in systematic trading may trigger automated position reduction. If the environment deteriorates past critical thresholds, the system may begin unwinding positions regardless of unrealized profit or loss.

Position reduction protocols are pre-committed responses to environment degradation. They remove the question “should we reduce here?” from real-time decision-making. The system already knows: when regime state exceeds threshold Z, reduce exposure by N%.
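The reduction rule above can be sketched as a fixed schedule. The severity scale (for example, a percentile rank of a stress score), the thresholds, and the cut fractions are all assumptions; the function deliberately takes no profit-or-loss input, matching the text.

```python
# Hypothetical schedule, most severe first: (severity threshold Z, fraction N to cut).
REDUCTION_SCHEDULE = [(0.95, 0.50), (0.90, 0.25)]


def target_quantity(current_qty: float, severity: float) -> float:
    """Pre-committed reduction: unrealized P&L is deliberately not an input."""
    for threshold, cut in REDUCTION_SCHEDULE:
        if severity > threshold:
            return current_qty * (1.0 - cut)
    return current_qty


print(target_quantity(1000, 0.97))  # 500.0
print(target_quantity(1000, 0.92))  # 750.0
print(target_quantity(1000, 0.50))  # 1000
```

Because the cut is applied as a fraction of the signed quantity, the same rule shrinks short positions toward zero as well.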

These protocols are controversial because every false alarm has a cost: positions are cut, realizing losses or forgoing gains that a recovery would have delivered. The alternative is worse: maintaining full exposure through regime transitions that deny stable execution, creating uncontrolled losses when forced exits eventually occur.

Conclusion

Volatility regime filtration respects present conditions

Volatility regime filtration is not about predicting the future. It is about respecting the present. When the environment signals that execution cannot occur stably, the correct response is refusal, not retry. When liquidity deteriorates, the disciplined choice is constraint tightening, not model adjustment.

This approach makes discipline enforceable. It removes discretion from real-time decisions. Permission is defined as an explicit input rather than an implicit assumption. Refusal becomes expected output rather than implementation failure.

Building reliable infrastructure through filtration

A system that can deny itself permission when conditions deteriorate is more reliable than one that assumes permission is always granted. That reliability is the foundation of institutional infrastructure. Volatility regime filtration encodes that principle into architecture.

Author: Dovest Research Team — Dovest.trade publishes institutional-style research on behaviour-first systematic trading infrastructure, focusing on market structure, filtration logic, execution constraints, and governance-by-design.

Disclaimer: This article is for educational and research purposes only and does not constitute financial advice, investment advice, or a recommendation to trade any instrument. Trading involves risk, including the risk of loss.